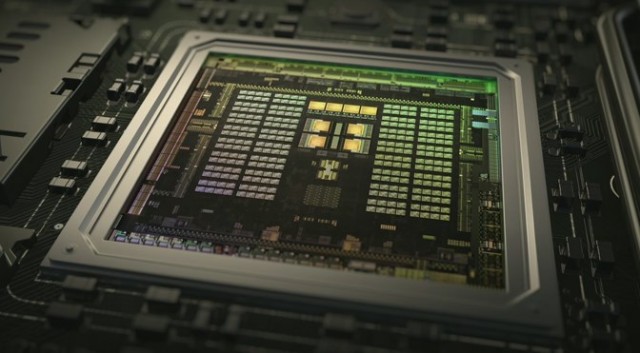

NEW YORK: Nvidia kicked off CES with a bevy of new announcements and a fundamentally new product push. A year ago, the company launched Tegra K1, the first fully programmable mobile GPU with DX12 support, based on its Kepler desktop architecture. Today, Nvidia is launching the Tegra X1: an eight-core SoC (four high-performance and four low-power CPU cores) with a 256-core Maxwell-based GPU, H.265 support, and full VP9 decode.

This is a substantial improvement over the current Tegra K1 on a number of fronts. Tegra X1 offers 33% more GPU cores than the K1 (256 versus 192), alongside Maxwell's superior performance-per-watt, lower power consumption, and bandwidth-saving capabilities. It's also Nvidia's first mobile GPU to run 16-bit floating point (FP16) math at twice its 32-bit rate, which gives it an enormous theoretical performance increase over its predecessor.
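To see where that theoretical gain comes from, here is a back-of-envelope sketch of peak throughput per GPU clock. It assumes (these are not figures from this article) that each core retires one fused multiply-add per clock, counted as 2 FLOPs, and that FP16 runs as packed pairs, doubling per-core throughput. It deliberately ignores clock-speed differences between the two chips, so it compares per-clock peaks only, not real-world performance.

```python
# Back-of-envelope peak throughput per GPU clock, under stated assumptions.
# ASSUMPTIONS (not from the article): one fused multiply-add (2 FLOPs) per
# core per clock, and packed FP16 doubling per-core throughput versus FP32.
# Clock speeds are ignored, so these are per-clock peaks only.

FLOPS_PER_FMA = 2

def flops_per_clock(cores: int, packed_fp16: bool = False) -> int:
    """Peak floating-point operations per GPU clock cycle."""
    lanes = 2 if packed_fp16 else 1  # two FP16 values per 32-bit lane
    return cores * FLOPS_PER_FMA * lanes

k1_fp32 = flops_per_clock(192)                    # Tegra K1 (Kepler), FP32
x1_fp32 = flops_per_clock(256)                    # Tegra X1 (Maxwell), FP32
x1_fp16 = flops_per_clock(256, packed_fp16=True)  # Tegra X1, packed FP16

print(f"K1 FP32: {k1_fp32} FLOPs/clock")               # 384
print(f"X1 FP32: {x1_fp32} FLOPs/clock")               # 512
print(f"X1 FP16: {x1_fp16} FLOPs/clock")               # 1024
print(f"Core-count gain: {x1_fp32 / k1_fp32:.2f}x")    # 1.33x
print(f"FP16 vs K1 FP32: {x1_fp16 / k1_fp32:.2f}x")    # 2.67x
```

Under these assumptions, the extra cores alone account for about a 1.33x per-clock gain, while FP16 workloads could see roughly 2.67x over the K1's FP32 peak.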

Nvidia argues that Tegra X1 will be far more power-efficient than any previous Tegra, and that makes sense based on what we've seen from Maxwell. Kepler set records for power efficiency when it debuted, and Maxwell has improved on those records. The company has also moved quickly: it took nearly two years to ship Tegra K1 after the first GTX 680 arrived, but Maxwell is debuting in mobile roughly a year after the GTX 750 Ti launched. Nvidia's CEO, Jen-Hsun Huang, put the gap at four months, but the first Maxwell-class hardware debuted at the beginning of 2014.

The point of Drive CX, Nvidia's new Tegra X1-based cockpit computer, is to serve as a development platform for Nvidia's Drive Studio and for a future in which the entire cockpit is virtualized and displayed as rich applications across multiple displays. The problem with this approach is that, however great it sounds, physical inputs and tactile feedback are critically important when driving. Many of the criticisms of MyFord Touch and similar systems have revolved around the fact that the all-touchscreen idea simply doesn't work.

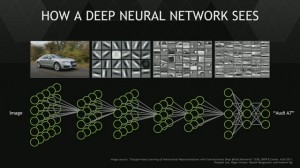

Nvidia, however, isn't just talking about building rich displays and high-end monitors into the car. It wants automotive companies to use its hardware to build multi-camera analysis systems and use that information to create the next generation of self-driving cars.

I'm not going to comment on what Nvidia is calling "deep neural net" technology, because that's a term that gets hellaciously misused by marketing departments and it's not clear exactly what Nvidia is referring to here. Jen-Hsun's keynote described using a neural net approach to train a chip to recognize what is (or isn't) a pedestrian, and to make the vehicle more situationally aware. These are the kinds of approaches that must improve if self-driving cars are ever going to come to fruition.

Will those advances arrive on the back of Tegra X1? It's hard to say. Car manufacturers have typically lagged mobile SoC developers by years; long update cycles and very different product needs have resulted in relatively poor adoption of cutting-edge technology. Nvidia's partner Audi, which has worked with the company at multiple CES shows and on concept cars, came on stage for several demos, including surround-view cameras, fully digital cockpits, and all-digital control systems. Clearly this is part of a long-term strategy for Nvidia, and likely the beginning of a five- to 10-year automotive roadmap. The liveblog was built around demos like Audi's concept car automatically parking itself, detecting its surroundings, and navigating various obstacles. Many of these are challenges that Google has also been facing, but Nvidia thinks its own technology, and the GPU at the heart of X1, presents a potential solution.